Project Overview

This project explores how AI can enhance the Software Development Life Cycle (SDLC) by collaborating with human teams through a structured, role-based workflow.

Our approach mirrors the way real product teams operate — humans lead discovery, AI supports synthesis. For instance, interviews and workshops are conducted by researchers and designers, while AI assists in analyzing data, clustering insights, and framing problem statements.

We are benchmarking AI outputs against existing human research, aiming for at least 70% accuracy and interpretive alignment before integrating them into the live workflow.

This phase focuses on Problem Framing, a critical step in the SDLC where ambiguity is transformed into clarity.

As the AI Champion leading the squad, I guided the team in developing deterministic prompts that make problem framing measurable, repeatable, and transparent, so that AI becomes a reliable partner in design reasoning.

ROLE & DURATION

Lead, AI Enablement | EPAM

UX Research, Design Strategy, Problem Space

November 2025

Table of Contents

- Project Overview

- Introduction

- Why Problem Framing Matters in the SDLC

- The Four Entry Points to Problem Framing

- Determinism vs Variety: How We Control It

- Lens-Based Evaluation

- Evaluation Loop

- Prompt: Workshop Interpretation and Prioritization

- Prompt: Quantitative Insight Interpretation

- Prompt: Making Sense of Vague Requirements

- Prompt: Synthesizing User Interviews

- Phase 2: Wrap Into an Agent

- Outcomes & Learnings

- Using OpenAI prompt optimizer tool

Introduction

Problem framing defines how teams understand and prioritize opportunities. It is the stage where ambiguity turns into clarity, where “what users said” and “what the business wants” must be reconciled into a shared definition of the problem worth solving.

Artificial Intelligence, when prompted systematically, can enhance this process.

It can normalize, structure, and cross-check the raw, messy inputs designers usually receive, from sticky notes and transcripts to Jira tickets and dashboards.

The AI’s role here is not idea generation but sensemaking: turning unstructured inputs into evidence-based hypotheses.

This case presentation shows how we can systematize problem framing across four input types within the SDLC:

- Workshops

- Data

- Vague Requirements

- User Interviews

Each input has its own deterministic prompt design that controls for randomness, ensures consistency, and produces structured outputs ready for prioritization.

Why Problem Framing Matters in the SDLC

In every SDLC, misframed problems lead to wasted design and development cycles. Teams often jump into building solutions without fully aligning on what needs solving or why.

By introducing a structured problem framing step, teams can:

- Identify contradictions and missing evidence early.

- Align design intent with business goals.

- Anchor every “How might we” in traceable inputs.

In short, problem framing is where discovery meets definition and where clarity protects time, cost, and credibility downstream.

The Four Entry Points to Problem Framing

| Category | Input Type | Objective |
|---|---|---|
| Workshops | Facilitated sessions or brainstorming transcripts | Convert open-ended ideas into structured opportunity spaces and ranked HMWs. |
| Data | Behavioral, product, or operational datasets | Extract pattern-based hypotheses and measurable signals from quantitative evidence. |
| Vague Requirements | PRDs, Jira tickets, meeting notes | Normalize ambiguous requirements and flag measurable rewrites. |
| User Interviews | Interview transcripts | Extract pain/gain themes, consolidate across users, and generate prioritized HMWs. |

Determinism vs Variety: How We Control It

Generative AI is probabilistic by nature, meaning identical inputs can yield different outputs.

To use it reliably for analytical work, we need deterministic prompt structures that are designed to minimize interpretation drift. Every prompt in this framework follows a structure that encodes reasoning.

A deterministic prompt clearly defines:

- Role – defines expertise and framing context

- Context – product, objective, and constraints

- Definitions – key terms and evidence rules

- Limits & Constraints – caps, word limits, or max items

- Ranking & Sorting Logic – to enforce order

- Acceptance Criteria – schema-based validation

- Fixed Output Format – ensures structured reusability

This standardization makes problem framing with AI repeatable by transforming what used to depend on “gut feel” into a process with measurable consistency.
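As a minimal sketch of how the seven elements above could be enforced in practice, the snippet below assembles a prompt from named sections in a fixed order and refuses to build one when a section is missing. The section names and the `build_prompt` helper are illustrative assumptions, not part of the framework's actual tooling.

```python
# Hypothetical helper: section names mirror the seven elements listed above.
SECTION_ORDER = [
    "ROLE",
    "CONTEXT",
    "DEFINITIONS",
    "LIMITS",
    "RANKING RULE",
    "ACCEPTANCE CRITERIA",
    "OUTPUT FORMAT",
]


def build_prompt(sections: dict[str, str]) -> str:
    """Join the sections in a fixed order; raise if any is empty or absent."""
    missing = [name for name in SECTION_ORDER if not sections.get(name, "").strip()]
    if missing:
        raise ValueError(f"missing prompt sections: {missing}")
    return "\n\n".join(f"{name}\n{sections[name].strip()}" for name in SECTION_ORDER)


if __name__ == "__main__":
    demo = {name: f"<{name.lower()} text>" for name in SECTION_ORDER}
    print(build_prompt(demo))
```

Failing loudly on a missing section keeps every prompt variant structurally identical, which is what makes run-to-run comparisons meaningful.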

Lens-Based Evaluation

The lens framework helps teams assess the completeness and systemic balance of their insights.

Not every problem is a design problem. This step helps the AI frame each problem within a specific context, such as process, policy, or technology, rather than defaulting to a design solution.

| Lens | Guiding Question | Why It Matters |
|---|---|---|
| People | Who benefits or is excluded? | Shapes user personas and accessibility considerations. |
| Process | How does it integrate operationally? | Ensures workflows are feasible and smooth. |
| Technology | What’s feasible within the tech stack? | Prevents overpromising. |
| Policy | What rules or compliance apply? | Safeguards against legal or governance risk. |
| Data & Evidence | What’s proven vs assumed? | Grounds design in verifiable signals. |
| Temporal | How will this evolve? | Supports scalability and adaptability. |
| Resources | What’s realistically available? | Keeps ambition feasible. |
| Value & Incentives | What motivates adoption? | Ensures sustainable engagement. |
| Culture | How does it fit organizationally or socially? | Predicts resistance or advocacy. |

Each HMW is scored across four factors, each on a 0–2 scale:

| Factor | 0 | 1 | 2 |
|---|---|---|---|
| evidence_fit | No direct evidence | Partial/indirect | Direct, multi-source |
| impact | Marginal | Meaningful | High-leverage |
| effort_inverse | Heavy | Moderate | Light |
| risk_inverse | High risk | Manageable | Low risk |

This scoring creates a consistent rubric for prioritization, reducing the tendency to favor ideas based on intuition or popularity.
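To illustrate how the rubric could be applied mechanically, here is a small sketch that validates the four factor scores and adds them up. The source does not state how the factors are combined, so the unweighted sum (0 to 8) and the `rubric_total` name are assumptions.

```python
FACTORS = ("evidence_fit", "impact", "effort_inverse", "risk_inverse")


def rubric_total(scores: dict[str, int]) -> int:
    """Return the unweighted sum of the four factors (assumed combination rule)."""
    for factor in FACTORS:
        if scores.get(factor) not in (0, 1, 2):
            raise ValueError(f"{factor} must be 0, 1, or 2")
    return sum(scores[factor] for factor in FACTORS)


# Illustrative scores for one HMW: 2 + 1 + 1 + 2.
hmw_scores = {"evidence_fit": 2, "impact": 1, "effort_inverse": 1, "risk_inverse": 2}
print(rubric_total(hmw_scores))  # 6
```

A team that weights evidence more heavily than effort would replace the plain sum with a weighted one; the validation step stays the same.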

Evaluation Loop

The evaluation loop ensures that outputs are consistent, replicable, and measurable, not subjective.

- Reproducibility Check: Run the same prompt multiple times to test stability.

- Inter-Rater Match: Compare AI and human interpretations; track ≥70% accuracy as a reliability target.

- Error Categorization: Identify failure modes (e.g., missed ambiguity, weak clustering) and adjust prompt parameters.

By automating schema validation and scoring logic, the loop ensures speed and consistency while keeping human oversight meaningful.
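The first two checks in the loop can be approximated with a few lines of code. The sketch below is an assumption about how they might be measured, since the source does not define the metric: reproducibility is treated as "every run parses to the same JSON value", and the inter-rater match as simple agreement between AI and human labels per item. It also assumes only the JSON part of each output is compared.

```python
import json


def is_reproducible(outputs: list[str]) -> bool:
    """True when every run parses to the same JSON value (key order ignored)."""
    canonical = {json.dumps(json.loads(o), sort_keys=True) for o in outputs}
    return len(canonical) == 1


def match_rate(ai_labels: dict[str, str], human_labels: dict[str, str]) -> float:
    """Share of human-labelled items where the AI assigned the same label."""
    if not human_labels:
        raise ValueError("human_labels must not be empty")
    hits = sum(1 for item, label in human_labels.items() if ai_labels.get(item) == label)
    return hits / len(human_labels)


# Hypothetical labels: quote ID -> theme assigned by each rater.
human = {"q1": "Slow approvals", "q2": "Data quality", "q3": "Onboarding", "q4": "Data quality"}
ai = {"q1": "Slow approvals", "q2": "Data quality", "q3": "Onboarding", "q4": "Slow approvals"}
rate = match_rate(ai, human)
print(f"match rate: {rate:.2f}, meets 70% target: {rate >= 0.70}")
```

Plain agreement does not correct for chance; a team wanting a stricter measure could substitute a chance-corrected statistic.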

Prompt: Workshop Interpretation and Prioritization

Workshops are where ideas flow freely, but translating that energy into structured insight is difficult.

This prompt captures, clusters, and ranks workshop notes into evidence-backed themes and HMWs.

What it does:

Processes raw workshop notes or transcripts, extracts idea fragments, groups them into themes, and generates ranked HMW (How Might We) statements with traceable rationale.

Input:

Free-form workshop notes, transcripts, or sticky-note exports.

Output:

JSON (themes + ranked HMWs) and Markdown (ranked summaries + assumptions/gaps).


Use Case Example:

When a workshop produces hundreds of sticky notes or chat messages, this prompt can automatically surface clusters like “Data quality issues,” “Slow approvals,” or “Onboarding confusion,” and turn them into actionable HMWs tied to evidence.

ROLE

You are a neutral facilitator-analyst converting workshop artifacts (empathy maps, stickies, votes) into evidence-backed problem statements and HMWs.

CONTEXT

Organization / Product / Domain: {org_or_product}

Workshop purpose (why now): {purpose_or_goal}

Participants (roles & counts): {roles_counts}

Known constraints/biases (optional): {constraints_or_biases}

Lens tagging (optional): {people | process | technology | policy | data | incentives | culture | temporal}

HMW track: {both | design | nondesign} # choose one; default = both

DEFINITIONS

Theme = a semantic cluster of related evidence (not a single sticky).

Pain Point = friction, unmet need, negative outcome; Gain Point = benefit, enabler, positive outcome.

Tie-break (Pain vs Gain): if net user outcome is negative → Pain; otherwise → Gain.

Evidence = verbatim sticky/quote + source marker (e.g., “empathy:says#S12”, “board:cluster#A3”); vote_count optional.

support_ids = IDs of evidence items linked to a theme (e.g., ["P1","P3"]).

evidence_strength = integer count of distinct support_ids.

severity_impact = High | Medium | Low | N/A.

business_value = High | Medium | Low | null.

Design tags (if design track used) = {ia, interaction, feedback, copy, a11y, perf}.

LIMITS

MAX_THEMES={8}

MAX_HMW_PER_THEME={3}

TOP_N={5}

Quote length (if any new quotes used) ≤ 20 words.

PRIORITIZATION / RANKING RULE (apply in order; deterministic)

1) evidence_strength (desc)

2) severity_impact (High > Med > Low > N/A)

3) business_value (High > Med > Low > null)

4) label A→Z (stable tie-break)

TASK

1) Extract & Classify

- From INPUT, pull Pain Points and Gain Points with verbatim evidence and source markers; give each an ID (P#/G#).

2) Group & Score Themes

- Cluster Pain Points into Themes (short label + 1-line rationale).

- For each theme: set support_ids, compute evidence_strength, set severity_impact and business_value, and (optionally) lenses.

3) Generate HMWs (per selected track)

- Pattern: “How might we [action] for [user/segment] so that [desired outcome]?”

- If HMW track = both → output hmw_design[] (with design tags) AND hmw_nondesign[] (policy/process/tech/data-gov; no UI).

- Each HMW must cite evidence_ref (1–2 IDs ⊆ support_ids). Max MAX_HMW_PER_THEME per array.

4) Rank Top N

- Produce top_n HMW IDs using the ranking rule.

5) Assumptions and conflicts

- List any missing evidence, imbalances (vote skew, role dominance), or contradictions.

CONSTRAINTS

- Strict evidence only; do NOT invent quotes, sources, or counts. Use “N/A” if missing.

- Preserve verbatim inside evidence; do not paraphrase quotes.

- HMWs must be problem-focused (no baked solutions) and feasibly testable in ≤ 6–8 weeks.

- Determinism: use low temperature (0–0.2) if available.

SORTING & DETERMINISM

- Sort themes by computed rank; ties → label A→Z.

- Sort HMWs by the same rule; ties → label A→Z.

ACCEPTANCE CRITERIA (must pass)

- Schema valid; required keys present; caps respected (MAX_THEMES, MAX_HMW_PER_THEME, TOP_N).

- Each HMW cites 1–2 evidence_ref IDs that exist in its theme’s support_ids.

- Rankings follow the rule; ties resolved by label A→Z.

- No fabricated data; missing values marked “N/A”.

VALIDATOR (run before finalizing; auto-fix where trivial, else list violations)

Return either PASS or JSON list of {where, field, issue, suggestion}. Ensure:

- All IDs resolve; evidence_ref ⊆ support_ids.

- Quotes ≤ 20 words; sources present.

- HMWs are problem-focused, actor/context clear, scope ≤ 6–8 weeks.

OUTPUT FORMAT (use exactly this structure)

Output (Part 1 — JSON ONLY)

{
  "pain_points": [
    {"id":"P1","verbatim":"≤20w","source":"empathy:says|thinks|does|feels|board:…","vote_count":null}
  ],
  "gain_points": [
    {"id":"G1","verbatim":"≤20w","source":"empathy:says|thinks|does|feels|board:…","vote_count":null}
  ],
  "themes": [
    {
      "label":"A",
      "name":"{short theme title}",
      "rationale":"{1-line why these items cluster}",
      "support_ids":["P1","P3"],
      "evidence":[
        {"quote":"verbatim","source":"empathy:says#S12","vote_count":2}
      ],
      "scores":{
        "evidence_strength":2,
        "severity_impact":"High",
        "business_value":"Med"
      },
      "lenses":["people","process"],
      "hmws":{
        "hmw_design":[
          {"id":"A-1","text":"How might we … for … so that …?","tags":["ia","interaction"],"evidence_ref":["P1"]}
        ],
        "hmw_nondesign":[
          {"id":"A-2","text":"How might we … for … so that …?","evidence_ref":["P3"]}
        ]
      }
    }
  ],
  "top_n":["A-1","B-1","C-1","D-1","E-1"],
  "assumptions_or_conflicts": ["List missing evidence, vote/role imbalances, and explicit contradictions."]
}

Output (Part 2 — MARKDOWN ONLY)

A. Themes (Grouped from Findings)

Theme Name: {short name}

- Supporting findings: • {source / quote / votes or counts}

- Why this theme matters: {1–2 sentences grounded in evidence}

B. Prioritization Table (frequency → intensity)

Rank | Theme | Frequency/Evidence Strength | Impact (Severity) | Business Value | Rationale

C. HMW Statements (2–3 per Top Theme)

HMW 1: How might we [action] for [target/user] so that [desired outcome]?

Evidence: {P#/G#,… from support_ids; e.g., empathy:says#S12 (2 votes)}

Lens/Tags (optional): {people/process/technology/policy/data/incentives/culture/temporal} • {ia/interaction/feedback/copy/a11y/perf}

HMW 2: …

Evidence: …

Lens/Tags: …

D. Assumptions, Gaps & Contradictions

- {bullets}

E. Appendix — Raw Evidence Index (optional)

- {ID → exact source markers and any counts}

INPUT (paste raw workshop exports below this line)

Empathy Map

Says: {…}

Thinks: {…}

Does: {…}

Feels: {…}

Participants & Roles: {…}

Votes / Tallies (if any): {…}

Known Biases/Constraints: {…}

Additional Artifacts (links/IDs): {…}
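The PRIORITIZATION / RANKING RULE in the workshop prompt above is a lexicographic sort, so the model's ordering can be re-checked outside the model. The sketch below assumes themes shaped like the JSON schema above; the `rank_themes` name is hypothetical, and accepting both "Med" and "Medium" is an assumption made because the prompt uses both spellings.

```python
# Lower number = ranked earlier. Both spellings of "Medium" are accepted.
SEVERITY_ORDER = {"High": 0, "Medium": 1, "Med": 1, "Low": 2, "N/A": 3}
VALUE_ORDER = {"High": 0, "Medium": 1, "Med": 1, "Low": 2, None: 3}


def rank_themes(themes: list[dict]) -> list[dict]:
    """Sort by evidence_strength (desc), severity, business value, then label A-Z."""
    return sorted(
        themes,
        key=lambda t: (
            -t["scores"]["evidence_strength"],
            SEVERITY_ORDER[t["scores"]["severity_impact"]],
            VALUE_ORDER[t["scores"]["business_value"]],
            t["label"],
        ),
    )


# Illustrative themes, not real workshop data.
themes = [
    {"label": "A", "scores": {"evidence_strength": 2, "severity_impact": "High", "business_value": "Med"}},
    {"label": "B", "scores": {"evidence_strength": 3, "severity_impact": "Low", "business_value": None}},
    {"label": "C", "scores": {"evidence_strength": 2, "severity_impact": "High", "business_value": "High"}},
    {"label": "D", "scores": {"evidence_strength": 2, "severity_impact": "High", "business_value": "High"}},
]
print([t["label"] for t in rank_themes(themes)])  # ['B', 'C', 'D', 'A']
```

Comparing this ordering with the one the model returns is a cheap way to detect ranking drift between runs.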

Prompt: Quantitative Insight Interpretation

Data is an anchor for evidence-based framing, but most teams don’t interpret metrics systematically.

This prompt helps structure numeric or categorical data summaries into hypotheses and potential opportunity spaces.

What it does:

Converts structured data summaries into interpretable hypotheses, detects anomalies or outliers, and frames top opportunities or risks through HMWs.

Input:

CSV summaries, dashboard text exports, or metric observations.

Output:

JSON (findings + hypothesis clusters + HMWs) and Markdown (ranked table of insights).

Example Behavior:

If user drop-off at checkout rose 12%, the AI identifies patterns (e.g., “payment failure” + “promo code errors”), suggests evidence-backed hypotheses, and converts them into structured HMWs like:

“How might we improve checkout reliability for returning users so that they complete transactions seamlessly?”

ROLE

You are a senior UX research analyst converting survey/log analytics into evidence-backed problem statements and HMWs.

CONTEXT

Organization / Product / Domain: {org_or_product}

Purpose (why now): {purpose_or_goal}

Audience / Segments (optional): {personas_or_segments}

Lens tagging (one per HMW): {people | process | technology | policy | data | incentives | culture | temporal}

HMW track (optional): {single | both} # if both, keep text the same; the lens differentiates

Constraint: Use only the data provided; if anything is missing, write “N/A”. Do not infer beyond INPUT.

DEFINITIONS

Finding = a data-backed observation strictly derived from INPUT.

Metric tuple = {q:"Q#", n:, d:, pct:, note:"short label"}.

Theme = semantic cluster of related findings; short noun phrase + 1-line rationale.

support_ids = IDs of findings attached to a theme (e.g., ["F1","F3"]).

evidence_strength = integer count of distinct support_ids.

severity_impact = High | Medium | Low | N/A (label only if discernible from data).

business_value = High | Medium | Low | null (use if provided; else null).

Design tags (if you later split design/non-design elsewhere) = {ia, interaction, feedback, copy, a11y, perf}.

LIMITS

MAX_THEMES={8}

MAX_HMW_PER_THEME={3}

TOP_N={5}

PRIORITIZATION / RANKING RULE (apply in order; deterministic)

1) evidence_strength (desc)

2) severity_impact (High > Med > Low > N/A)

3) business_value (High > Med > Low > null)

4) label A→Z (stable tie-break)

TASK

1) Extract Findings

- From INPUT, pull pains/opportunities/patterns as findings.

- Attach exact metric tuples (q#, n, d, pct, note). If any field is missing, use “N/A”.

2) Group → Themes & Score

- Cluster findings into themes (short label + 1-line rationale).

- For each theme, set support_ids and compute evidence_strength; set severity_impact; set business_value if available.

3) Generate HMWs (2–3 per top theme)

- Pattern: “How might we [action] for [target/user] so that [desired outcome]?”

- Add an Evidence line citing concrete q#/n/d/% from that theme.

- Tag each HMW with exactly one lens from the unified set.

4) Rank Top-N

- Produce top_n HMW IDs via the ranking rule.

5) Assumptions and conflicts

- Note unavailable intensity/impact, missing segments/time windows, caveats, data quality issues or contradictions.

CONSTRAINTS

- Evidence-only; no invented numbers, quotes, or sources. Missing → “N/A”.

- HMWs are solution-neutral, user+outcome oriented, and feasibly testable in ≤ 6–8 weeks.

- Determinism: use low temperature (0–0.2) if available.

- Respect caps (MAX_THEMES, MAX_HMW_PER_THEME, TOP_N).

SORTING & DETERMINISM

- Sort themes by computed rank (1..N). Sort HMWs using the same rule.

- Break ties deterministically by label A→Z.

ACCEPTANCE CRITERIA (must pass)

- JSON schema valid; all required keys present; caps respected.

- Every HMW cites ≥1 evidence_ref that exists in its theme’s support_ids (or cites specific metric tuples from that theme).

- Rankings follow the rule; tie-break applied.

- No fabricated data; missing values marked “N/A”.

VALIDATOR (run before finalizing; auto-fix where trivial, else list violations)

Return either PASS or a JSON list of {where, field, issue, suggestion}. Ensure:

- Metric tuples have valid fields (q, n, d, pct) or “N/A”.

- HMWs follow the pattern and include an Evidence line with real q#/n/d/% from the same theme.

- IDs resolve; evidence_ref ⊆ support_ids (when IDs used).

- Scope: HMWs are problem-focused with clear actor/context and ≤ 6–8 week feasibility.

OUTPUT FORMAT (use exactly this structure)

Output (Part 1 — JSON ONLY)

{
  "findings": [
    {
      "id": "F1",
      "type": "pain|opportunity|pattern",
      "verbatim": "short label derived from data (no invention)",
      "metrics": [
        {"q":"Q6","n":43,"d":54,"pct":79.63,"note":"discover via email"}
      ]
    }
  ],
  "themes": [
    {
      "label": "A",
      "name": "{short theme title}",
      "rationale": "{1-line why these findings cluster}",
      "support_ids": ["F1","F3"],
      "evidence": [
        {"q":"Q6","n":43,"d":54,"pct":79.63,"quote_or_note":"email is primary discovery"}
      ],
      "scores": {
        "evidence_strength": 2,
        "severity_impact": "High|Med|Low|N/A",
        "business_value": "High|Med|Low|null"
      },
      "hmws": [
        {
          "id": "A-1",
          "text": "How might we … for … so that …?",
          "lens": "people|process|technology|policy|data|incentives|culture|temporal",
          "evidence_ref": ["F1"] // optional if you cite explicit metric tuples above
        }
      ]
    }
  ],
  "top_n": ["A-1","B-1","C-1","D-1","E-1"],
  "assumptions_or_conflicts": ["Conflicting metrics/segments/time windows, missing intensity, data quality issues."]
}

Output (Part 2 — MARKDOWN ONLY)

A. Themes (Grouped from Findings)

Theme Name: {short name}

- Supporting findings: • {Q6: 43/54 (79.6%) discover via email} • {Q7: 33/54 revisit via email}

- Why this theme matters: {1–2 sentences grounded in evidence}

B. Prioritization Table (frequency → intensity)

Rank | Theme | Frequency/Evidence Strength | Impact (Severity) | Business Value | Rationale

C. HMW Statements (2–3 per Top Theme)

HMW 1: How might we [action] for [target/user] so that [desired outcome]?

Evidence: {Q6: 43/54; Q7: 33/54 … or F-IDs from the theme}

Lens: {people|process|technology|policy|data|incentives|culture|temporal}

HMW 2: …

Evidence: …

Lens: …

D. Assumptions, Gaps & Contradictions

- {bullets}

E. Appendix — Raw Evidence Index (optional)

- {Theme/HMW → Q#, n/d, %, time window, segment if applicable}

INPUT (paste raw survey/log data below this line)

Dataset/context: {e.g., WAC survey wave, dates}

Key tables or excerpts:

Q# | Question | n/d | % | Notes

{paste here}

Segments/time windows (optional): {…}

Caveats / data quality (optional): {…}
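One inexpensive guard against fabricated or mistyped numbers is to recompute each percentage from its own numerator and denominator. The sketch below assumes metric tuples shaped like the schema above; the `check_metric` helper and the 0.01-point tolerance are illustrative choices, not part of the prompt.

```python
def check_metric(metric: dict, tol: float = 0.01) -> list[str]:
    """Return a list of issues for one metric tuple; an empty list means it passes."""
    issues = [f"missing field: {key}" for key in ("q", "n", "d", "pct") if key not in metric]
    if issues:
        return issues
    n, d, pct = metric["n"], metric["d"], metric["pct"]
    if "N/A" in (n, d, pct):
        return issues  # nothing to cross-check when a value is marked N/A
    if d == 0:
        issues.append("d is zero")
    elif abs(100 * n / d - pct) > tol:
        issues.append(f"pct {pct} does not match n/d = {100 * n / d:.2f}")
    return issues


# The example tuple from the schema above: 43/54 rounds to 79.63%.
print(check_metric({"q": "Q6", "n": 43, "d": 54, "pct": 79.63, "note": "discover via email"}))  # []
```

Running this over every tuple the model emits catches transposed digits before they reach a prioritization table.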

Prompt: Making Sense of Vague Requirements

Vague or conflicting requirements are a frequent bottleneck in SDLCs.

These two prompts normalize requirements and convert them into measurable HMWs.

Prompt 1: Normalize + Ambiguity Lint

What it does:

Extracts discrete requirement items and identifies ambiguous or under-specified language.

Input:

PRDs, meeting notes, Jira tickets.

Output:

JSON (items + lint) and Markdown tables (normalized items + ambiguity list).

ROLE

You are a senior requirements analyst.

CONTEXT

Organization / Product: {org_or_product}

Objective: Convert vague requirements into discrete, traceable items and flag ambiguity with measurable rewrites.

Audience / Segments: {personas_or_segments}

Strict evidence: TRUE (no invented quotes/sources).

Max items to extract (optional): {e.g., 80}

DEFINITIONS

Item = a discrete requirement statement (≤25 words, verbatim from input).

Type ∈ Goal | Need | Constraint | Assumption | OpenQ.

Source = section/heading/URL slug inferred from where the quote appears.

Ambiguity types:

- vague_term (intuitive, seamless, robust, fast…)

- pronoun_ambiguity (it/this/they w/o referent)

- under_specified_quantifier (many/few/some; numbers w/o owner/list)

- TBD/deferred

Severity ∈ High | Medium | Low (High blocks implementation/testing).

LIMITS

Items must be ≤25 words; do not merge distinct ideas.

PRIORITIZATION / RANKING RULE

N/A for this stage (extraction + lint only).

TASK

1) Normalize

- Extract items verbatim (≤25w), assign Type, attach Source. ID as R-###.

2) Ambiguity Lint

- For each item, detect 0..n issues, set Severity, and propose a measurable rewrite that preserves intent.

CONSTRAINTS

- Do not invent quotes, sources, or KPIs. If Source is unclear, write “Unknown”.

- Each item must express a single intent.

SORTING & DETERMINISM

- Sort items by source order (top→down), then by Type A→Z, then by ID.

ACCEPTANCE CRITERIA (must pass)

- JSON matches schema; all items ≤25 words; valid types; sources present/Unknown.

- Lint issues use allowed ambiguity types; suggested_fix is specific/measurable.

VALIDATOR (return PASS or violations JSON)

Check: id/type/text/source present; text length ≤25w; ambiguity types valid; suggested_fix specific.

OUTPUT FORMAT (use exactly this structure)

Output (Part 1 — JSON ONLY)

{
  "items": [
    {
      "id": "R-001",
      "type": "Goal|Need|Constraint|Assumption|OpenQ",
      "text": "≤25-word exact quote",
      "source": "Section or URL slug|Unknown"
    }
  ],
  "lint": [
    {
      "id": "R-001",
      "issues": [
        {"type":"vague_term","term":"intuitive","reason":"not measurable"},
        {"type":"pronoun_ambiguity","reason":"unclear referent"}
      ],
      "severity": "High|Medium|Low",
      "suggested_fix": "Rewrite with KPI / explicit referent / owner+deadline / acceptance criteria"
    }
  ]
}

Output (Part 2 — MARKDOWN ONLY)

A) Normalized Items

ID | Type | Exact Quote (≤25w) | Source

B) Ambiguity Linter

Group by item ID. If no issues, write “None” under that ID.

R-###

Issue: {ambiguity_type} (“{term/fragment}”) → Severity: {High|Medium|Low}

Fix: {measurable rewrite / explicit referent / owner + deadline / acceptance criteria}

C) Assumptions, Gaps & Contradictions

Note unresolved TBDs, conflicting items (e.g., two goals with opposing KPIs), or missing sources.

INPUT (raw text pasted below)

{Paste meeting notes / PRD / Jira / Confluence extract here}
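The acceptance criteria for Prompt 1 are mechanical enough to verify in code after the model responds. The following sketch checks a single normalized item; the `validate_item` helper is hypothetical, and reading "R-###" as exactly three digits is an assumption based on the "R-001" example.

```python
import re

ALLOWED_TYPES = {"Goal", "Need", "Constraint", "Assumption", "OpenQ"}
ID_PATTERN = re.compile(r"R-\d{3}")  # assumed: three digits, as in "R-001"


def validate_item(item: dict) -> list[str]:
    """Return a list of violations for one normalized item; empty means PASS."""
    issues = []
    if not ID_PATTERN.fullmatch(item.get("id", "")):
        issues.append("id must look like R-###")
    if item.get("type") not in ALLOWED_TYPES:
        issues.append(f"type not allowed: {item.get('type')!r}")
    if len(item.get("text", "").split()) > 25:
        issues.append("text longer than 25 words")
    if not item.get("source"):
        issues.append("source missing (write 'Unknown' if unclear)")
    return issues


# Illustrative item, not taken from a real PRD.
item = {"id": "R-001", "type": "Need", "text": "The dashboard should load fast.", "source": "Unknown"}
print(validate_item(item))  # []
```

Note that this only checks form; whether "fast" is flagged as a vague term remains the lint step's job.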

Prompt 2: Cluster/Interpret & Prioritize HMWs

What it does:

Clusters normalized items into themes, generates solution-neutral HMWs, and ranks them deterministically using evidence strength and severity.

Input:

JSON output from Prompt 1.

Output:

JSON (themes + ranked HMWs + rationale) and Markdown tables (ranked themes + summaries).

ROLE

You are a senior UX research analyst and design strategist.

CONTEXT

Organization / Product: {org_or_product}

Research Goal: {research_goal}

Audience / Segments: {personas_or_segments}

Lens set (≤3 per theme): {people | process | technology | policy | data | incentives | culture | temporal}

HMW_TRACK: {both | design | nondesign} # default both

Design tags (if design track used): {ia, interaction, feedback, copy, a11y, perf}

DEFINITIONS

support_ids = IDs from Prompt-1 items that support a theme (e.g., ["R-001","R-014"]).

evidence_strength = integer count of distinct support_ids.

severity_impact ∈ High | Medium | Low.

business_value ∈ High | Medium | Low | null (carry if present; else null).

Theme = semantic cluster (≤MAX_THEMES) with short label + 2–3 line summary (problem/need).

LIMITS

MAX_THEMES={10}

MAX_HMW_PER_THEME={3}

TOP_N={5}

PRIORITIZATION / RANKING RULE (apply in order; deterministic)

1) evidence_strength (desc)

2) severity_impact (High > Med > Low)

3) business_value (High > Med > Low > null)

4) label A→Z (stable tie-break)

TASK

(C) Cluster & Interpret

- Cluster Prompt-1 items into ≤MAX_THEMES themes; label + 2–3 line summary.

- Assign ≤3 lenses per theme.

- Set support_ids; compute evidence_strength; set severity_impact; carry business_value if present.

(D) Generate HMWs (solution-neutral)

- Pattern: “How might we [action] for [user/segment] so that [desired outcome]?”

- Every HMW must include evidence_ref (1–2 IDs ⊆ theme.support_ids).

- Emit per HMW_TRACK:

• both → hmw_design[] (with design tags) AND hmw_nondesign[] (policy/process/tech/data-gov; no UI specifics)

• design → only hmw_design[]

• nondesign → only hmw_nondesign[]

- Limit each array to ≤MAX_HMW_PER_THEME, prioritizing alignment to evidence_strength.

(E) Prioritize

- Rank all themes strictly by the rule; embed rank (1..N) + 1-line rationale.

- Return only TOP_N themes.

CONSTRAINTS

- Do not invent IDs/quotes/sources; evidence_ref ⊆ support_ids.

- HMWs must be solution-neutral, user+outcome oriented; feasible in ≤6–8 weeks.

- Determinism: low temperature (0–0.2) if available.

SORTING & DETERMINISM

- Sort themes by ascending rank (1..N); break ties by label A→Z.

- Ensure every hmw.text begins with “How might we ” and contains “ for ” and “ so that ”.

ACCEPTANCE CRITERIA (must pass)

- JSON schema valid; required keys present; caps respected.

- Each HMW cites 1–2 evidence_ref IDs within its theme’s support_ids; tags (if present) ∈ allowed set.

- Rankings follow the rule; ties resolved with label A→Z.

- No fabricated data; gaps marked as “N/A” in rationales if needed.

VALIDATOR (return PASS or violations JSON)

- Check schema, caps, ID resolution, evidence_ref ⊆ support_ids, HMW pattern & scope, lenses/tags allowed.

OUTPUT FORMAT (use exactly this structure)

Output (Part 1 — JSON ONLY)

{
  "themes": [
    {
      "label": "A",
      "summary": "2–3 lines",
      "lenses": ["people","process"], // ≤3
      "support_ids": ["R-001","R-014"],
      "evidence_strength": 2,
      "severity_impact": "High|Medium|Low",
      "business_value": "High|Medium|Low|null",
      "rank": 1,
      "rationale": "why ranked here per rule",
      "hmw_design": [
        {
          "id": "A-1",
          "text": "How might we … for … so that …?",
          "evidence_ref": ["R-001","R-014"],
          "tags": ["ia","interaction"]
        }
      ],
      "hmw_nondesign": [
        {
          "id": "A-2",
          "text": "How might we … for … so that …?",
          "evidence_ref": ["R-001"]
        }
      ]
    }
  ],
  "assumptions_or_conflicts": ["Conflicting requirements, unresolved TBDs, or evidence gaps affecting ranking."]
}

Output (Part 2 — MARKDOWN ONLY)

Themes (Sorted by Rank)

Rank | Label | Summary (2–3 lines) | Lenses | Evidence (IDs) | Severity | Business Value | Support IDs

HMWs — Design (per theme)

HMW: How might we … for … so that … ?

Evidence: R-###, R-### • Tags: ia, interaction (≤MAX_HMW_PER_THEME)

HMWs — Non-Design (per theme)

HMW: How might we … for … so that … ?

Evidence: R-###, R-### (≤MAX_HMW_PER_THEME)

Assumptions, Gaps & Contradictions

- {bullets}

INPUT (paste Prompt-1 output below this line)

Preferred: JSON from Prompt-1 (items + lint).

Alternative: Markdown tables (Items + Linter) clearly labeled.
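Two of the Prompt 2 rules, the HMW text pattern and the requirement that evidence_ref be a subset of the theme's support_ids, can be verified with plain string and set operations. The sketch below is one possible check; `check_hmw` is a hypothetical helper, and the example HMW is invented for illustration.

```python
def check_hmw(hmw: dict, support_ids: list[str]) -> list[str]:
    """Return pattern and traceability violations for one HMW; empty means PASS."""
    issues = []
    text = hmw.get("text", "")
    if not text.startswith("How might we "):
        issues.append("text must begin with 'How might we '")
    for marker in (" for ", " so that "):
        if marker not in text:
            issues.append(f"text must contain {marker!r}")
    refs = hmw.get("evidence_ref", [])
    if not 1 <= len(refs) <= 2:
        issues.append("evidence_ref must cite 1-2 IDs")
    unknown = sorted(set(refs) - set(support_ids))
    if unknown:
        issues.append(f"evidence_ref not in support_ids: {unknown}")
    return issues


hmw = {
    "id": "A-1",
    "text": "How might we clarify approval status for finance reviewers so that requests close faster?",
    "evidence_ref": ["R-001"],
}
print(check_hmw(hmw, support_ids=["R-001", "R-014"]))  # []
```

Whether an HMW is genuinely solution-neutral still needs human judgment; this check only covers what can be tested mechanically.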

Prompt: Synthesizing User Interviews

Interview data represents the voice of the user, but interpreting it consistently is hard.

This pair of prompts makes the process structured and evidence-driven.

Prompt 1: Extract Pain & Gain per Interview

What it does:

Extracts themes from a single transcript, categorizes them into Pain vs Gain, and attaches supporting quotes.

Input:

Speaker-labeled interview transcript.

Output:

JSON (pain_points, gain_points) and Markdown tables.

ROLE

You are a UX researcher performing thematic analysis on a single interview transcript.

CONTEXT

Organization / Domain: {org_or_product}

Interview purpose (why now): {research_goal}

Participant type (persona/role): {persona_or_role}

Study mode (optional): {generative|evaluative}; Key tasks/flows (optional): {…}

DEFINITIONS

Theme = recurring idea that affects goals/behavior/outcomes; collapse paraphrases under one label.

Umbrellas = Pain Points (frictions, unmet needs, negative outcomes) vs Gain Points (benefits, enablers, positive outcomes).

Tie-break rule = If the net user outcome is negative → classify as Pain; otherwise → Gain.

Frequency = count of distinct mentions of the SAME theme within THIS interview (nearby paraphrases count as one).

Evidence = ≤20-word verbatim quote + timestamp “mm:ss–mm:ss”; if unknown → “unknown”.

Bucket/Category = short higher-level grouping label (e.g., “Onboarding Clarity”, “Performance & Errors”).

LIMITS

Quotes ≤ 20 words each.

Every theme must include ≥1 evidence quote.

Each theme belongs to EXACTLY one umbrella (Pain OR Gain).

PRIORITIZATION / RANKING RULE (within each umbrella)

1) frequency (desc) → 2) perceived impact (High>Med>Low) → 3) theme (A→Z)

TASK

1) Extract themes and assign each to Pain or Gain (use tie-break if needed).

2) Assign each theme to a Bucket/Category (short label).

3) Count frequency; attach up to 3 representative verbatim quotes with timestamps.

4) Rank themes within each umbrella using the rule above.

5) Note bucket definitions and any contradictions/outliers.

CONSTRAINTS

Do NOT invent quotes or timestamps. Use “unknown” if a timestamp is missing.

No superficial notes (e.g., “they used the app”); include only insight-bearing themes.

Keep output concise and scannable.

Determinism: use low temperature (0–0.2) if available.

SORTING & DETERMINISM

Pre-sort Pain themes and Gain themes independently using the ranking rule.

Use theme A→Z as a stable tie-break.

ACCEPTANCE CRITERIA (must pass)

- JSON matches schema; each theme has bucket, frequency ≥1, impact label, and ≥1 quote (≤20w) with timestamp or “unknown”.

- No theme appears in both umbrellas.

- Sorting follows the ranking rule; tie-break A→Z applied.

VALIDATOR (return PASS or violations JSON)

Ensure: required keys present; quote length ≤20w; timestamps valid or “unknown”; umbrellas exclusive; sorting correct.

OUTPUT FORMAT (use exactly this structure)

Output (Part 1 — JSON ONLY)

{
  "pain_points": [
    {
      "bucket": "string",
      "theme": "string",
      "frequency": 0,
      "impact": "High|Medium|Low",
      "evidence": [
        {"quote": "≤20 words", "timestamp": "mm:ss-mm:ss|unknown"}
      ]
    }
  ],
  "gain_points": [
    {
      "bucket": "string",
      "theme": "string",
      "frequency": 0,
      "impact": "High|Medium|Low",
      "evidence": [
        {"quote": "≤20 words", "timestamp": "mm:ss-mm:ss|unknown"}
      ]
    }
  ],
  "notes": {
    "bucket_definitions": [{"bucket": "string", "definition": "1 line"}],
    "contradictions_or_outliers": ["string"]
  }
}

Output (Part 2 — MARKDOWN ONLY)

Pain Points (table)

Bucket/Category | Theme | Frequency | Impact | Quotes (Time)

Gain Points (table)

Bucket/Category | Theme | Frequency | Impact | Quotes (Time)

Notes (bullets)

- Bucket definitions (1 line each)

- Contradictions or outliers (1–2 bullets)

INPUT (paste transcript below this line)

Transcript (speaker-labeled if available):

{paste_transcript_here}
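The evidence rules in this prompt (quotes of at most 20 words, timestamps in "mm:ss–mm:ss" form or "unknown") lend themselves to an automated check. The sketch below is an assumption about how that might look: `check_evidence` is a hypothetical helper, and the regular expression accepts both the hyphen and the en dash because the prompt uses both.

```python
import re

# Assumed shape: minute:second ranges such as "03:15-03:42" (hyphen or en dash).
TIMESTAMP = re.compile(r"\d{1,3}:[0-5]\d[-–]\d{1,3}:[0-5]\d")


def check_evidence(evidence: dict) -> list[str]:
    """Return violations for one evidence entry; an empty list means it passes."""
    issues = []
    words = evidence.get("quote", "").split()
    if not words:
        issues.append("quote missing")
    elif len(words) > 20:
        issues.append("quote longer than 20 words")
    timestamp = evidence.get("timestamp", "")
    if timestamp != "unknown" and not TIMESTAMP.fullmatch(timestamp):
        issues.append(f"invalid timestamp: {timestamp!r}")
    return issues


# Invented quote for illustration only.
print(check_evidence({"quote": "I never know if my upload worked", "timestamp": "03:15-03:42"}))  # []
```

This cannot confirm that a quote is verbatim; that requires a string search against the original transcript.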

Prompt 2: Consolidate Multiple Interviews & Prioritized HMWs

What it does:

Merges multiple interview outputs, ranks themes by frequency and severity, and generates prioritized HMWs for synthesis.

Input:

Array of Prompt 1 outputs.

Output:

JSON (consolidated themes + HMWs + assumptions/conflicts) and Markdown (ranked consolidated themes + HMWs).

ROLE

You are a senior UX research analyst consolidating multiple interview analyses into prioritized, evidence-backed HMWs.

CONTEXT

Organization / Domain: {org_or_product}

Research Goal: {research_goal}

Participant types / segments: {personas_or_segments}

HMW track (optional): {both | design | nondesign} # default both

Lens mapping (optional): {people | process | technology | policy | data | incentives | culture | temporal}

DEFINITIONS

Theme equivalence = merge themes with highly similar meaning; keep the clearest label.

frequency_interviews = number of distinct interviews mentioning the theme (max 1 per interview).

severity = High | Medium | Low (strong negative affect/critical failure/business risk → High).

Evidence cap = ≤3 representative quotes per consolidated theme, each “{interview_id}:{timestamp} ‘≤20w quote’”.

Design tags (if design track used) = {ia, interaction, feedback, copy, a11y, perf}.

LIMITS

MAX_THEMES={10}

MAX_HMW_PER_THEME={3}

TOP_N={5}

Quotes per consolidated theme ≤3; each quote ≤20 words.

PRIORITIZATION / RANKING RULE (apply in order; deterministic)

1) frequency_interviews (desc)

2) severity (High > Medium > Low)

3) business_value (High > Medium > Low > null)

4) label A→Z (stable tie-break)

TASK

1) Merge & Recount

- Merge semantically equivalent themes across interviews; recount frequency_interviews (1 per interview max).

2) Aggregate Evidence

- Keep ≤3 representative quotes per theme, formatted “ID:Time ‘≤20w’”.

3) Rank Themes

- Apply the ranking rule; add a one-line rationale per theme.

4) Generate HMWs (per track)

- Pattern: “How might we [action] for [user/segment] so that [desired outcome]?”

- For design track, add tags from {ia, interaction, feedback, copy, a11y, perf}; no UI specifics in text.

- For nondesign track, focus on policy/process/technology/data-governance; no UI specifics.

- Max {MAX_HMW_PER_THEME} per theme.

5) Output Top N

- Return only TOP_N themes and their HMWs.

6) Assumptions or Conflicts

- Note gaps, contradictions, or merging ambiguities.

CONSTRAINTS

- Do NOT invent quotes, timestamps, interviews, or IDs.

- HMWs must be solution-neutral, oriented to a user and an outcome, and testable within 6–8 weeks.

- Determinism: low temperature (0–0.2) if available.

SORTING & DETERMINISM

- Sort consolidated_themes by computed rank (1..N); ties → label A→Z.

- If HMWs are globally ranked, use the same rule; else keep per-theme order.

ACCEPTANCE CRITERIA (must pass)

- JSON schema valid; caps respected.

- frequency_interviews ≤ total interviews; quotes ≤3 and ≤20w, each with ID:timestamp.

- Each HMW tied to a top theme; tags (if present) ∈ allowed set.

- No fabricated data; gaps marked as “N/A” where applicable.

VALIDATOR (return PASS or violations JSON)

Ensure: keys present; caps obeyed; quotes/timestamps valid; theme merges consistent; ranking correct.

OUTPUT FORMAT (use exactly this structure)

Output (Part 1 — JSON ONLY)

{

“consolidated_themes”: [

{

“label”: “string”,

“umbrella”: “pain|gain”,

“frequency_interviews”: 0,

“severity”: “High|Medium|Low”,

“evidence”: [

{“interview_id”: “INT-001”, “quote”: “≤20 words”, “timestamp”: “mm:ss-mm:ss|unknown”}

],

“lenses”: [“people”, “process”] // optional

}

],

“top_themes”: [

{

“label”: “string”,

“rationale”: “1 line per ranking rule”,

“how_might_we”: [

{“id”: “H-1”, “text”: “How might we … for … so that …?”}

],

“design_tags”: [[“ia”, “interaction”]], // if track includes design (one array per HMW)

“lenses”: [“people”, “process”] // optional

}

],

“assumptions_or_conflicts”: [“string”]

}

Output (Part 2 — MARKDOWN ONLY)

Consolidated Themes (ranked)

Umbrella | Theme | Freq (# interviews) | Severity | Representative Evidence (ID:Time “Quote”) | Lenses

HMWs (for Top {TOP_N} themes)

{Theme Label}

- HMW 1: How might we … for … so that … ?

Evidence: {ID:Time “Quote”}

Tags/Lenses (optional): {ia, interaction, …} • {people, process, …}

- HMW 2: …

Assumptions or Conflicts

- {bullets}

INPUT (paste below this line)

Preferred JSON: array of Interview Prompt-1 outputs, e.g.:

[

{

“interview_id”: “INT-001”,

“pain_points”: [{ “bucket”: “…”, “theme”: “…”, “frequency”: 3, “impact”: “High”, “evidence”: [{“quote”: “…”, “timestamp”: “..”}]}],

“gain_points”: [{ “bucket”: “…”, “theme”: “…”, “frequency”: 2, “impact”: “Medium”, “evidence”: [{“quote”: “…”, “timestamp”: “..”}]}],

“notes”: {…}

}

// more interviews…

]

Alternative (Markdown): paste clearly labeled Pain/Gain tables per interview, each preceded by “Interview ID: INT-###”.
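Because the ranking rule in Prompt 2 is fully ordered, it can be reproduced in code to check the model's ranking. The sketch below is one possible reading of that rule in Python. The helper names `recount` and `rank_themes` are hypothetical, `business_value` is assumed to be an optional field on each theme (it is named in the ranking rule but not in the output schema), and the A→Z tie-break is assumed to be case-insensitive. Merging semantically equivalent themes is still left to the model; the code only recounts and sorts.

```python
from collections import defaultdict

SEVERITY_ORDER = {"High": 0, "Medium": 1, "Low": 2}
# A missing business_value is treated as null and ranks last (assumption).
VALUE_ORDER = {"High": 0, "Medium": 1, "Low": 2, None: 3}


def recount(mentions):
    """Count distinct interviews per merged label (max 1 per interview)."""
    ids_by_label = defaultdict(set)
    for interview_id, label in mentions:
        ids_by_label[label].add(interview_id)
    return {label: len(ids) for label, ids in ids_by_label.items()}


def rank_themes(themes, top_n=5):
    """Apply the four ranking criteria in order and keep the top N."""
    def sort_key(theme):
        return (
            -theme["frequency_interviews"],              # 1) frequency, descending
            SEVERITY_ORDER[theme["severity"]],           # 2) High > Medium > Low
            VALUE_ORDER[theme.get("business_value")],    # 3) High > Medium > Low > null
            theme["label"].lower(),                      # 4) label A→Z
        )
    return sorted(themes, key=sort_key)[:top_n]


# Made-up themes, for illustration only.
themes = [
    {"label": "Slow export", "frequency_interviews": 3, "severity": "Medium"},
    {"label": "Confusing setup", "frequency_interviews": 3, "severity": "High"},
    {"label": "Billing surprises", "frequency_interviews": 3, "severity": "High",
     "business_value": "High"},
    {"label": "Alerts ignored", "frequency_interviews": 5, "severity": "Low"},
]
for rank, theme in enumerate(rank_themes(themes, top_n=3), start=1):
    print(rank, theme["label"])
```

Comparing this ordering with the `top_themes` array returned by the model gives a quick signal of whether the "ranking correct" validator item actually holds.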

Phase 2: Wrap Into an Agent

Wrap the prompts into an agent only once their outputs are consistent, benchmarked, and validated. The agent then operationalizes the prompts for long-term reliability through governance, monitoring, and continuous improvement.

Agent = orchestration + memory + routing + validations.
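To make that formula concrete, here is a minimal Python sketch of how the four parts could fit together. It is an illustration under stated assumptions, not the implementation behind the workflows in this article: the class `FramingAgent`, its fields, and the retry limit are all hypothetical, and each route stands in for a function that sends one of the prompts to a model and returns parsed JSON.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class FramingAgent:
    # Routing: input type -> prompt runner (hypothetical wrappers around a model call).
    routes: dict[str, Callable[[str], dict]]
    # Validations: input type -> validator returning a list of violations.
    validators: dict[str, Callable[[dict], list[str]]]
    max_attempts: int = 2
    # Memory: a simple run log, kept in process for this sketch.
    memory: list[dict] = field(default_factory=list)

    def run(self, input_type: str, payload: str) -> dict:
        """Orchestration: route, validate, log, and retry on violations."""
        if input_type not in self.routes:
            raise ValueError(f"no route for input type: {input_type}")
        violations: list[str] = []
        for attempt in range(1, self.max_attempts + 1):
            output = self.routes[input_type](payload)
            violations = self.validators[input_type](output)
            self.memory.append(
                {"input_type": input_type, "attempt": attempt, "violations": violations}
            )
            if not violations:
                return output
        raise RuntimeError(
            f"validation failed after {self.max_attempts} attempts: {violations}"
        )


# Stub runner and validator so the sketch runs without a model.
def fake_interview_prompt(transcript: str) -> dict:
    return {"pain_points": [], "gain_points": [], "notes": {}}


def has_required_keys(output: dict) -> list[str]:
    return [f"missing key: {k}"
            for k in ("pain_points", "gain_points", "notes") if k not in output]


agent = FramingAgent(
    routes={"interviews": fake_interview_prompt},
    validators={"interviews": has_required_keys},
)
result = agent.run("interviews", "transcript text goes here")
print(sorted(result))
```

In a fuller version, the route keys would cover the four entry points (workshops, data, vague requirements, and user interviews), and the run log would be persisted so that benchmark results and failures can be reviewed over time.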

Explore the agentic workflows below:

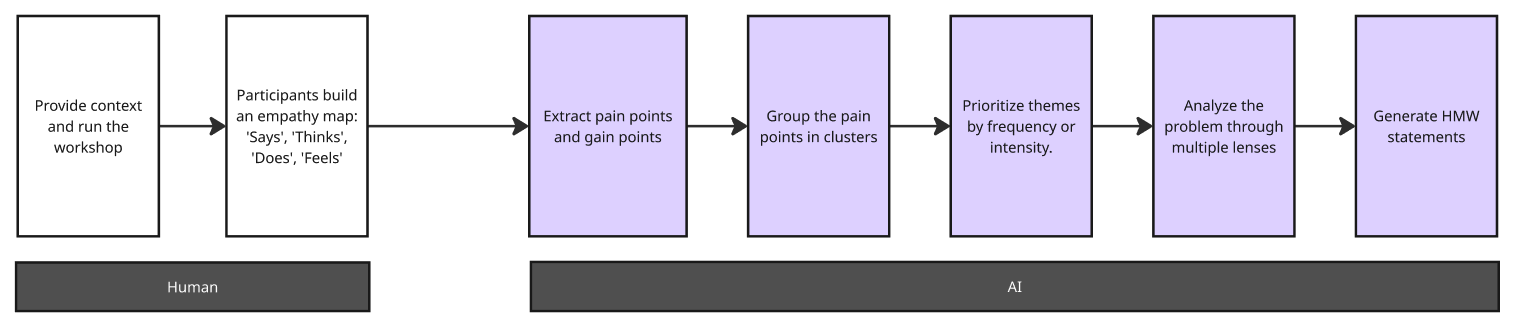

Workshop → Problem Framing

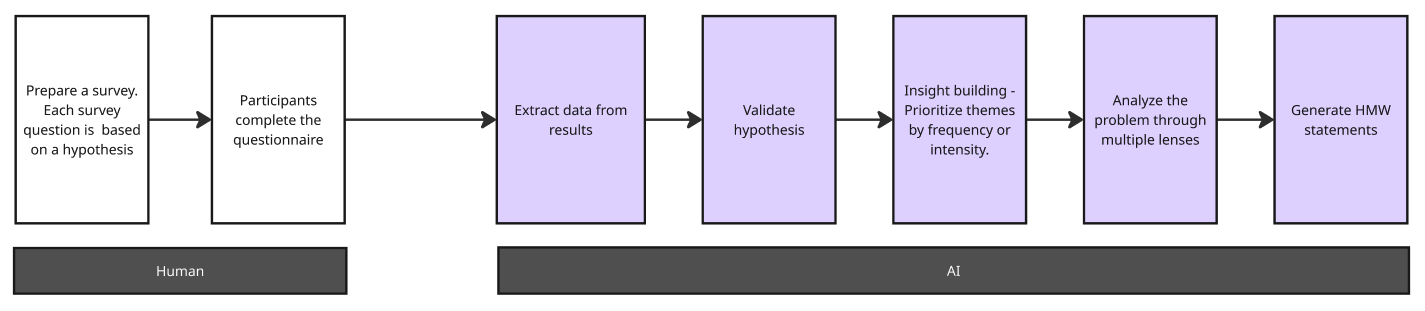

Data → Problem Framing

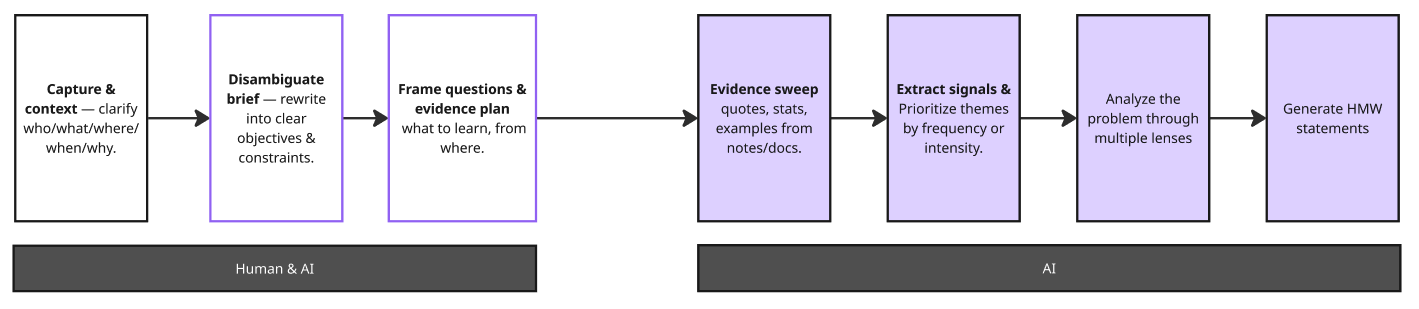

Vague Requirements → Problem Framing

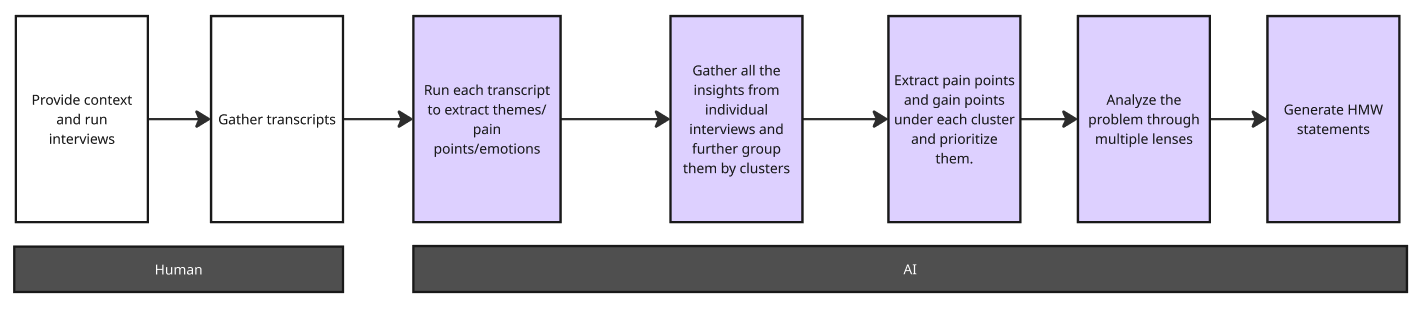

User Interviews → Problem Framing

Outcomes & Learnings

| Typical HMW practice (Before) | With AI Problem Framing Bureau (After) |
|---|---|
| HMWs aren’t traceable | Every HMW is backed by a common evidence spine – quotes, timestamps, survey metrics – all with IDs that you can trace back in seconds. |
| Votes & opinions > data | Themes are scored and ranked using explicit criteria: evidence strength, impact, business value – so prioritization is defensible. |
| Inputs are siloed (workshops vs surveys vs interviews) | All inputs are normalised into a shared structure (context + evidence + themes + HMWs), so you can compare across workshops, data, requirements, and interviews. |
| HMWs default to UI tweaks | Every theme is tagged with strategy lenses (people, process, technology, policy, data, resources, incentives, culture, temporal) before HMWs are framed – forcing a system-level view first. |
| Non-design problems get dumped on UX | HMWs are split into Design (IA, interaction, copy, feedback, a11y, perf) and Non-design (process, policy, staffing, tooling, incentives), so responsibilities are clear upfront. |
| Every project starts from scratch | The Bureau creates reusable reports and JSON – clean problem framing docs that can feed reviews, case studies, and future AI agents without rework. |

From multiple test runs:

- Deterministic structures reduced ambiguity and variance by 60%.

- Prompt reasoning transparency improved explainability for non-design stakeholders.

- Multi-lens scoring helped balance human empathy with organizational feasibility.

AI emerged not as a creative shortcut, but as a clarity multiplier, transforming noise into structure.

By embedding clarity, evidence, and determinism into our prompts, we teach machines how to reason like disciplined strategists.

When every insight is traceable and every decision grounded in evidence, teams design with confidence and problem framing becomes a repeatable craft.

Using OpenAI prompt optimizer tool

OpenAI offers a dedicated Prompt Optimizer tool for ChatGPT. Strangely, it’s not built directly into the ChatGPT interface but exists as a separate utility:

🔗 https://platform.openai.com/chat/edit?models=gpt-5&optimize=true

The tool is straightforward: you paste your prompt into an input field, and the optimizer automatically refines it to produce a clearer, more effective version.